But… what it lacks in features, it makes up for by perfecting the the existing ones. It’s quite different than the others in this list, as it has far fewer features. Its biggest drawback is that Xfolders is very similar, but free.įileBrowse strikes me as a ‘love it or hate it’ File Manager. The built in FTP client is helpful, the built-in preview works well and mix of Mac native and Norton-Commander styles blend really well.

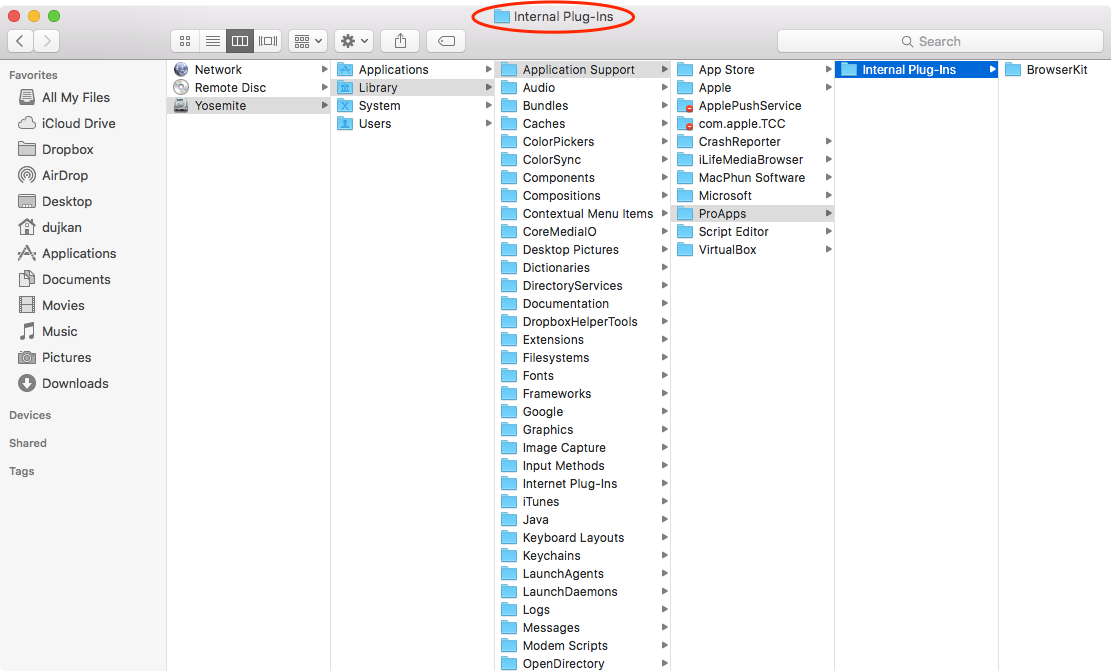

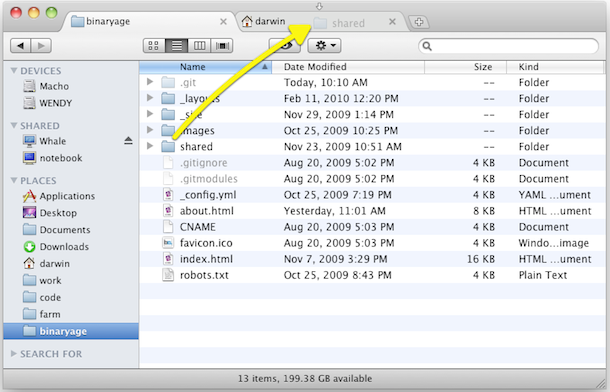

Compare Directories, wildcard selectionĭisk Order is pretty nice.Two file selection modes (Mac native and Norton-Commander-like).Very usable interface (Eject buttons by volume names and FTP sessions, customizable toolbar, Drives panel).Plug-in architecture (Terminal window, Burn CDs, Zip, Unzip, Untar etc.).Archives support (tar, gz, tgz, bz, bz2, tbz, zip).Built-in FTP-client (create, upload, download, CHMOD, transfer mode, encodings, viewing files and so on…).Built-in Viewer (viewing html, rtf, mov, mp3, jpg, gif, tiff etc.).Requirements: OSX 10.3 and higher, there is an older version for 10.2 that won’t be updated and has fewer features It does share the same “3 view” option as Finder (list, columns, icons), but the addition of tabs helps make for a far less cluttered desktop.īeing the 2nd most expensive File Manager reviewed, it’s a good thing that there’s a 21 day fully-functional demo available. It uses more system resources than most of the other File Managers outlined in this article, and isn’t above the occasional crash. The Path Finder menu item/button is also a helpful feature (see image below). It has many, many features that you won’t find in Finder (top of the list would be tabs, desktop icon changes and the ‘drop stack’).

Path Finder is by far the most “popular” alternative to Finder for OS X. Improved Japanese, French, Danish and German localizations.Added ability to disable the Finder’s desktop (see the General preferences).Improved the Size Browser’s interface (now a regular window with toolbar).


Conversational Memory for LLMs with LangChain

Conversational memory is how a chatbot can respond to multiple queries in a chat-like manner. It enables a coherent conversation, and without it, every query would be treated as an entirely independent input without considering past interactions.

The LLM with and without conversational memory. The blue boxes are user prompts and in grey are the LLM’s responses. Without conversational memory (right), the LLM cannot respond using knowledge of previous interactions.

The memory allows a Large Language Model (LLM) to remember previous interactions with the user. By default, LLMs are stateless, meaning each incoming query is processed independently of other interactions. The only thing that exists for a stateless agent is the current input, nothing else.

There are many applications where remembering previous interactions is very important, such as chatbots. Conversational memory allows us to do that.

There are several ways that we can implement conversational memory. In the context of LangChain (/learn/langchain-intro/), they are all built on top of the ConversationChain.

Following the initial prompt, we see two parameters.

We initialize the ConversationChain with the summary memory like so:

The human greeted the AI with a good morning, to which the AI responded with a good morning and asked how it could help. The human expressed interest in exploring the potential of integrating Large Language Models with external knowledge, to which the AI responded positively and asked for more information. The human asked the AI to think of different possibilities, and the AI suggested three options: using the large language model to generate a set of candidate answers and then using external knowledge to filter out the most relevant answers, score and rank the answers, or refine the answers. The human then asked which data source types could be used to give context to the model, to which the AI responded that there are many different types of data sources that could be used, such as structured data sources, unstructured data sources, or external APIs. Additionally, the model could be trained on a combination of these data sources to provide a more comprehensive understanding of the context. The human then asked what their aim was again, to which the AI responded that their aim was to explore the potential of integrating Large Language Models with external knowledge.

The number of tokens being used for this conversation is greater than when using the ConversationBufferMemory, so is there any advantage to using ConversationSummaryMemory over the buffer memory? For longer conversations, yes.

Token count (y-axis) for the buffer memory vs. summary memory as the number of interactions (x-axis) increases.

As shown above, the summary memory initially uses far more tokens. However, as the conversation progresses, the summarization approach grows more slowly. In contrast, the buffer memory continues to grow linearly with the number of tokens in the chat.

We can summarize the pros and cons of ConversationSummaryMemory as follows:

Pros:

- Shortens the number of tokens for long conversations.
- Relatively straightforward implementation, intuitively simple to understand.

Cons:

- Can result in higher token usage for smaller conversations.
- Memorization of the conversation history is wholly reliant on the summarization ability of the intermediate summarization LLM.
- Also requires token usage for the summarization LLM; this increases costs (but does not limit conversation length).

Conversation summarization is a good approach for cases where long conversations are expected. Yet, it is still fundamentally limited by token limits. After a certain amount of time, we still exceed context window limits.

The ConversationBufferWindowMemory acts in the same way as our earlier “buffer memory” but adds a window to the memory, meaning that we only keep a given number of past interactions before “forgetting” them.

We use it like so:

`conversation_sum_bufw = ConversationChain(llm=llm, memory=ConversationSummaryBufferMemory(…`

When applying this to our earlier conversation, we can set max_token_limit to a small number and yet the LLM can remember our earlier “aim”. This is because that information is captured by the “summarization” component of the memory, despite being missed by the “buffer window” component.
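To make the windowed and summary-plus-buffer ideas discussed in this article concrete, here is a rough, library-free sketch in plain Python. It is not LangChain's implementation: the class names `BufferWindowMemory` and `SummaryBufferMemory`, the window size `k`, the word-counting `count_tokens` helper and the `toy_summarize` function are all hypothetical stand-ins chosen for illustration. Only the `max_token_limit` name is borrowed from the text above, and a real setup would call a summarization LLM where `toy_summarize` is used.

```python
from collections import deque
from typing import Callable, Deque, List, Tuple

Turn = Tuple[str, str]  # (human message, AI reply)


def count_tokens(text: str) -> int:
    """Crude stand-in for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


def render(turns) -> str:
    """Formats turns as alternating Human/AI lines."""
    return "\n".join(f"Human: {h}\nAI: {a}" for h, a in turns)


class BufferWindowMemory:
    """Keeps only the last k interactions and forgets anything older."""

    def __init__(self, k: int) -> None:
        self.turns: Deque[Turn] = deque(maxlen=k)

    def save(self, human: str, ai: str) -> None:
        self.turns.append((human, ai))  # deque drops the oldest turn itself

    def history(self) -> str:
        return render(self.turns)


class SummaryBufferMemory:
    """Keeps recent turns verbatim and folds older ones into a summary."""

    def __init__(
        self,
        summarize: Callable[[str, List[Turn]], str],
        max_token_limit: int,
    ) -> None:
        self.summarize = summarize
        self.max_token_limit = max_token_limit
        self.summary = ""
        self.turns: List[Turn] = []

    def _buffer_tokens(self) -> int:
        return sum(count_tokens(h) + count_tokens(a) for h, a in self.turns)

    def save(self, human: str, ai: str) -> None:
        self.turns.append((human, ai))
        pruned: List[Turn] = []
        # Move the oldest turns out of the buffer until it fits the limit.
        while self.turns and self._buffer_tokens() > self.max_token_limit:
            pruned.append(self.turns.pop(0))
        if pruned:
            self.summary = self.summarize(self.summary, pruned)

    def history(self) -> str:
        parts = [p for p in (self.summary, render(self.turns)) if p]
        return "\n".join(parts)


def toy_summarize(previous: str, pruned: List[Turn]) -> str:
    """Placeholder for the summarization LLM: records each pruned human message."""
    notes = [f"The human said: {h}" for h, _ in pruned]
    return " ".join(([previous] if previous else []) + notes)
```

With a window of one interaction, the windowed memory loses an early statement of the user's aim as soon as a second turn arrives. The summary-plus-buffer variant with a small `max_token_limit` pushes that same turn into the summary instead, which is why the aim can still be recalled later.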

You are free to change your profile name at any given time. In fact, Steam even keeps a list of previous names to help other users find you. You can also delete past names if you want.

The two most significant differences between your profile name and Steam ID can be summarized as follows:

- Profile name can be changed, but the Steam ID can’t.
- Steam ID is unique, but the profile name doesn’t have to be.

There’s also one more thing to keep in mind. In addition to a profile name and Steam ID, you also have a username or account name. This is the unique name you use to log into Steam. It’s a private name, contrasting with your profile name. But, like your Steam ID, your username can’t be changed.

Tip: You can share your Steam games with family and friends without them needing to buy their own copy.

Learning how to find your Steam ID isn’t really difficult. Each method gives the same result, but you may find one is easier than the others.

- Via your account’s details – An easy method that digs through Steam’s settings.
- Using a multiplayer Valve game – This only applies to certain games. If you don’t have a multiplayer Valve game, you’ll need to choose another option. However, this is an incredibly easy option.
- With URL view – Finding your Steam ID can be as simple as turning on the URL view in the desktop client or visiting your profile in any supported browser.
- Using Steam ID Finder – Search for your Steam ID64 using this lookup tool. You can search using your custom URL to find your ID number.

Of course, you don’t have to just stick with a boring ID number. If you don’t already have a custom ID, you can quickly change from a number to something much easier to remember within your profile settings.
